Every Binary, Understood.

Open-source plugins for Ghidra, Binary Ninja, and IDA Pro that bring LLM reasoning, autonomous agents, and semantic knowledge graphs directly into your analysis workflow.

Stop Context-Switching. Start Understanding.

Reverse engineering is slow because the tools don't think with you. You copy pseudocode into a chat window, wait for a response, then manually apply the results back. You rename one function at a time. You build mental models of call graphs that evaporate between sessions.

Our plugins eliminate that friction. Ask questions about code without leaving your disassembler. Get function explanations, rename suggestions, and vulnerability assessments in seconds. Build a persistent knowledge graph that captures what each function does, how functions relate, and where the risks are, so your analysis compounds instead of starting over.

One Plugin for Your Disassembler

The same core capabilities, native to Ghidra, Binary Ninja, and IDA Pro. Install, configure an LLM provider, and start analyzing.

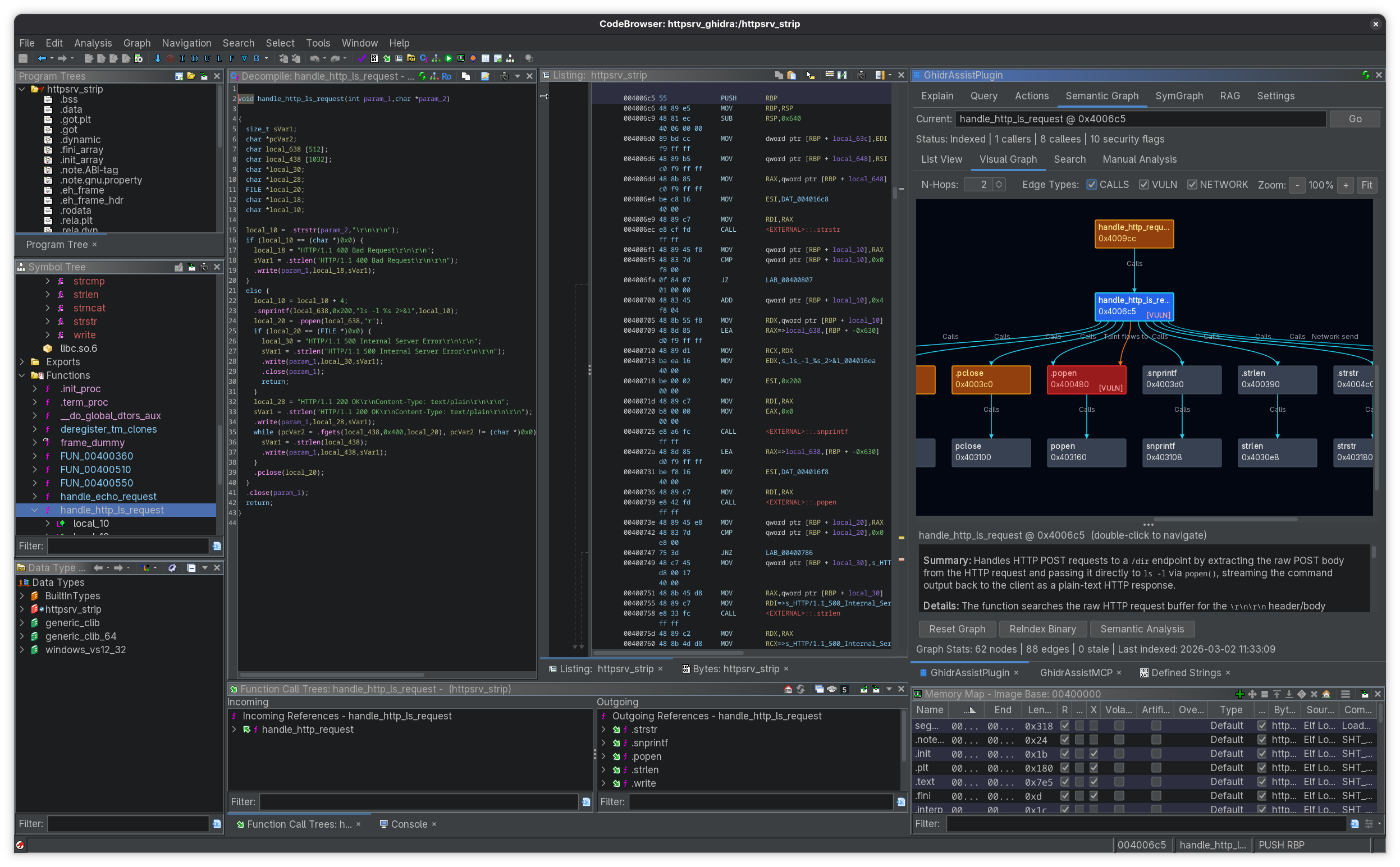

GhidrAssist

A seven-tab sidebar that turns Ghidra into an AI workbench. Select a function, get a plain-English explanation with security risk flags. Ask follow-up questions with context macros that auto-inject decompiled code, cross-references, or call graphs. Let the ReAct agent autonomously trace data flows across dozens of functions while you watch.

- One-click function explanation with security scoring

- Interactive chat with @function, @xrefs, @callgraph macros

- Autonomous ReAct agent for multi-step investigations

- Semantic graph: index every function, search by behavior

- RAG: upload specs, RFCs, or vendor docs for context

- Batch actions: rename, retype, and create structs from AI suggestions
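To make the chat macros concrete, here is a minimal sketch of how an @-macro might be expanded before a prompt is sent to the model. The `expand_macros` function and the provider callables are hypothetical names used for illustration; a real plugin would pull the text from the disassembler rather than from hard-coded strings.

```python
import re

def expand_macros(prompt, providers):
    """Replace each @name token with the text returned by providers[name]."""
    def substitute(match):
        provider = providers.get(match.group(1))
        # Unknown macros are left untouched so typos stay visible to the user.
        return provider() if provider else match.group(0)
    return re.sub(r"@(\w+)", substitute, prompt)

# Hypothetical providers; stand-ins for decompiler and cross-reference queries.
providers = {
    "function": lambda: "int check(char *s) { return strlen(s) < 16; }",
    "xrefs": lambda: "called from: main, handle_request",
}

expanded = expand_macros("Explain @function given @xrefs and @unknown", providers)
print(expanded)
```

Keeping providers as callables means context is only gathered for the macros that actually appear in the prompt.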

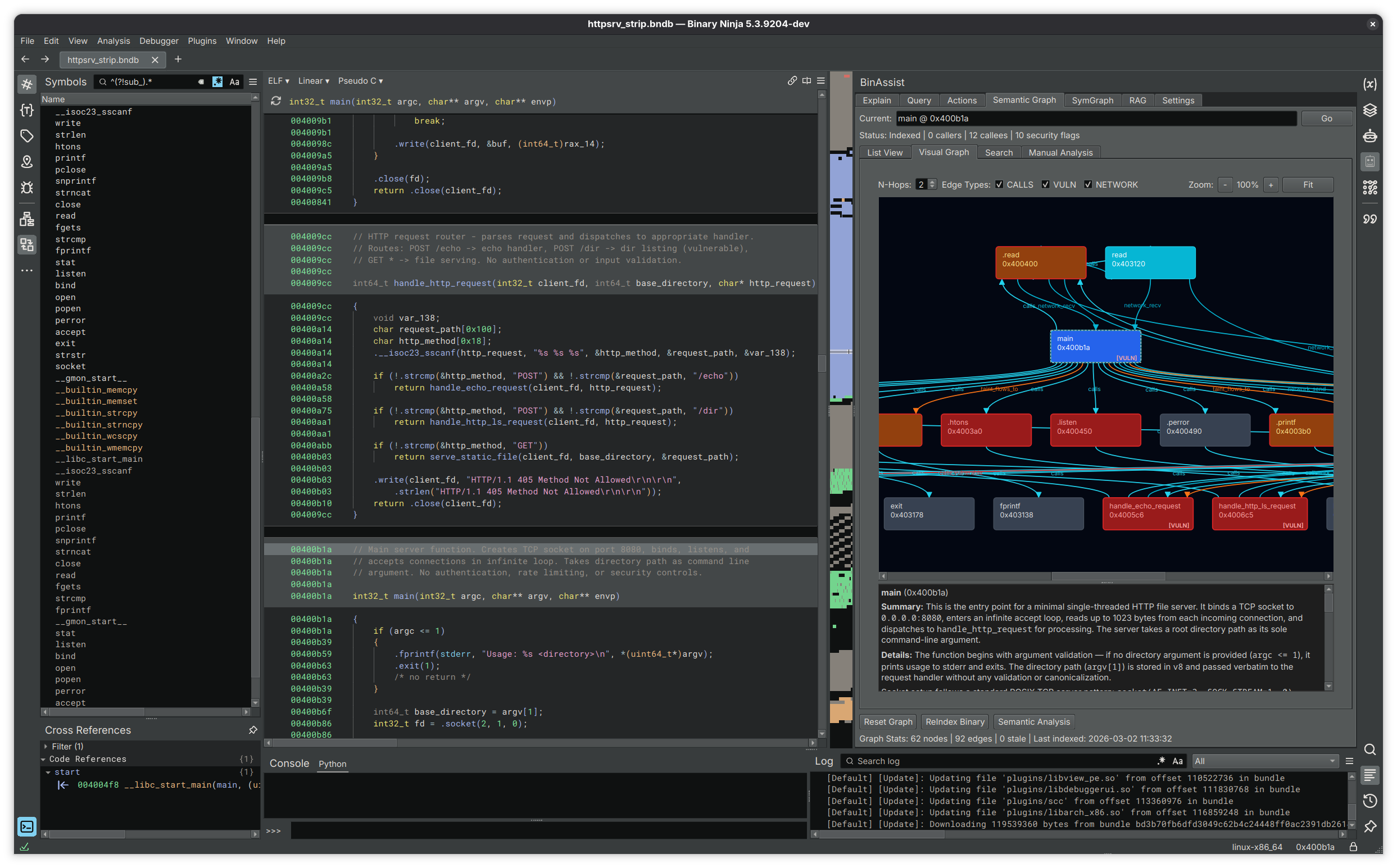

BinAssist

A native Binary Ninja sidebar built on Qt that streams LLM responses in real time. Navigate to any function and get instant analysis. The Actions tab generates rename and retype suggestions with confidence scores — apply them individually or in bulk. Extended thinking mode lets you dial up reasoning depth for complex vulnerability research.

- Streaming responses in a dockable sidebar panel

- Confidence-scored rename and retype suggestions

- ReAct agent with tool calling for deep investigation

- Knowledge graph with taint flow and community detection

- Extended thinking: four reasoning effort levels

- Works with cloud APIs, local Ollama, or your own proxy

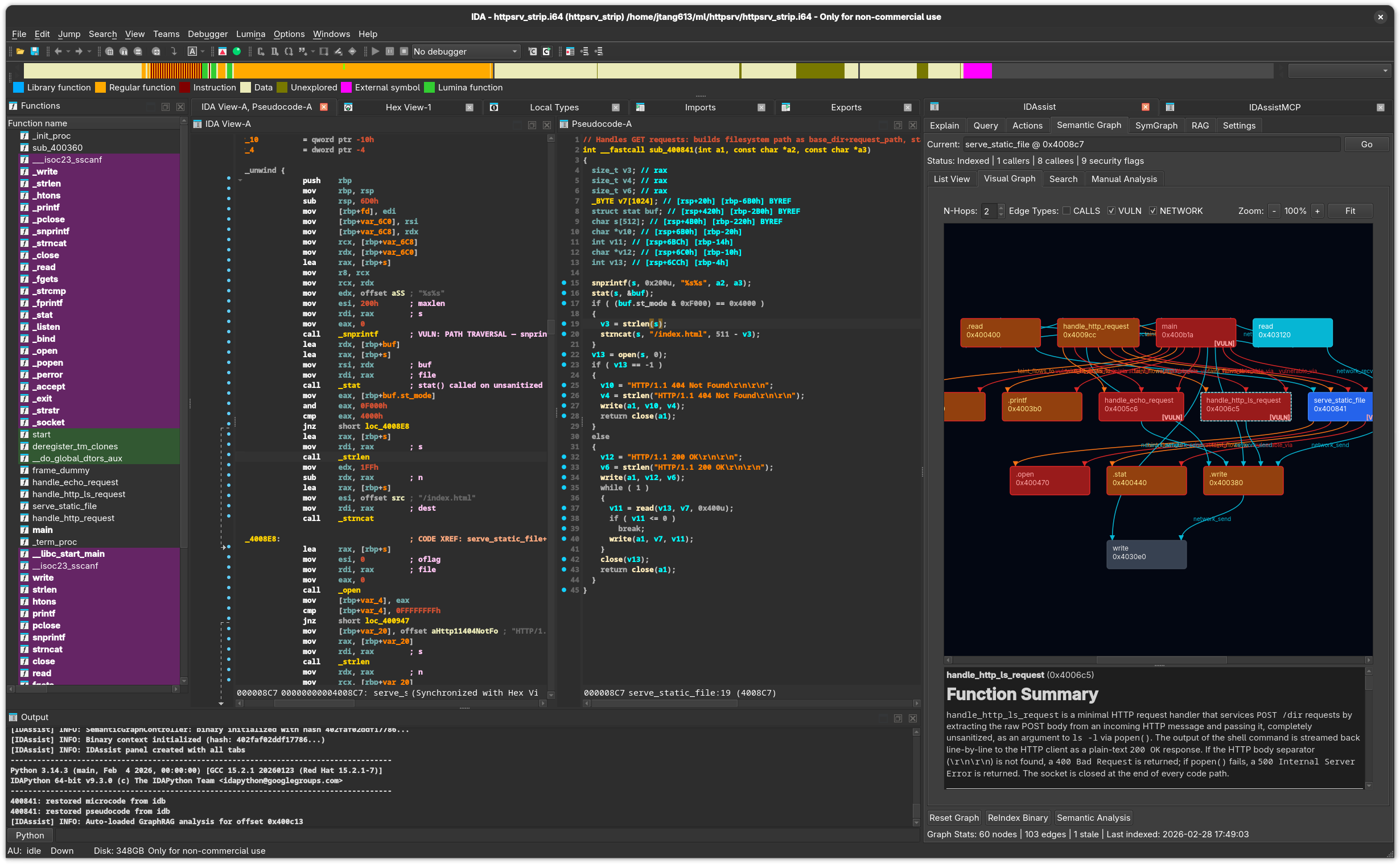

IDAssist

A dockable panel for IDA Pro 9.x that brings the same AI workflow to Hex-Rays users. Visual graph exploration renders function relationships with Graphviz layouts. Community detection automatically groups related functions into logical modules, giving you a high-level map of unfamiliar binaries in minutes instead of days.

- Native IDA Pro 9.x dockable panel

- Visual knowledge graph with Graphviz rendering

- Community detection for automatic module identification

- Confidence-scored suggestions for names, types, and structs

- Taint and network flow analysis across functions

- All analysis stored locally in SQLite — your data stays yours

A Workflow That Compounds

Each step builds on the last. By the end, you have a searchable, annotated map of the entire binary.

Explain Functions

Select a function, click Explain. The LLM reads the decompiled code and produces a summary, purpose description, and security risk assessment. Explanations are stored persistently so you never re-analyze the same function twice.

Investigate with Autonomous Agents

Ask a question like "trace user input through this binary" and the ReAct agent takes over. It plans an investigation, calls MCP tools to read functions, follow cross-references, and navigate the call graph — then synthesizes a comprehensive answer across dozens of functions, all without you clicking a thing.
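The loop behind an agent like this can be sketched in a few lines. This is a generic ReAct-style loop under stated assumptions, not the plugins' actual implementation: `react_loop`, the step dictionary shape, the `get_callees` tool, and the scripted model are all hypothetical, and the scripted model stands in for a real LLM so the example runs offline.

```python
# Toy call graph used by the hypothetical get_callees tool.
CALLS = {"main": ["read_input"], "read_input": ["parse_packet"], "parse_packet": []}

def react_loop(question, llm, tools, max_steps=8):
    """Alternate between model reasoning and tool calls until an answer appears."""
    transcript = [("question", question)]
    for _ in range(max_steps):
        step = llm(transcript)  # dict with "thought" plus "action" or "answer"
        transcript.append(("thought", step["thought"]))
        if "answer" in step:
            return step["answer"], transcript
        tool_name, argument = step["action"]
        transcript.append(("observation", tools[tool_name](argument)))
    return None, transcript  # step budget exhausted without an answer

def scripted_llm():
    """Stand-in for a real model: replays a fixed plan, ignoring the transcript."""
    steps = iter([
        {"thought": "Start at main.", "action": ("get_callees", "main")},
        {"thought": "Follow read_input.", "action": ("get_callees", "read_input")},
        {"thought": "Reached a leaf.", "answer": "main -> read_input -> parse_packet"},
    ])
    return lambda transcript: next(steps)

tools = {"get_callees": lambda name: CALLS.get(name, [])}
answer, transcript = react_loop("trace user input", scripted_llm(), tools)
print(answer)
```

The `max_steps` cap is the important design choice: it bounds cost and keeps a confused model from looping indefinitely.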

Apply Rename and Retype Suggestions

The Actions tab generates batch rename, retype, and struct creation suggestions for every function and variable in scope. Each suggestion comes with a confidence score. Review the list, accept what looks right, and move on.
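As an illustration of reviewing by confidence, the sketch below filters a suggestion list against a threshold before a bulk apply. The `Suggestion` fields, the `select_for_bulk_apply` helper, and the 0.8 default are assumptions made for this example, not the plugins' actual data model.

```python
from dataclasses import dataclass

@dataclass
class Suggestion:
    kind: str          # "rename", "retype", or "struct"
    target: str        # current name of the function or variable
    proposal: str      # suggested new name or type
    confidence: float  # 0.0 to 1.0

def select_for_bulk_apply(suggestions, threshold=0.8):
    """Keep suggestions at or above the threshold, most confident first."""
    accepted = [s for s in suggestions if s.confidence >= threshold]
    return sorted(accepted, key=lambda s: s.confidence, reverse=True)

suggestions = [
    Suggestion("rename", "sub_401000", "parse_header", 0.93),
    Suggestion("retype", "var_18", "uint32_t", 0.61),
    Suggestion("rename", "sub_401200", "send_reply", 0.85),
]
selected = select_for_bulk_apply(suggestions)
print([s.proposal for s in selected])
```

Anything below the threshold stays in the list for manual review rather than being discarded.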

Build the Knowledge Graph

Index the entire binary into a semantic graph. Every function gets a summary, security flags, and call relationships. Search by behavior ("which functions parse network input?"), visualize clusters, and trace taint flows — all without leaving your disassembler.
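A toy version of such a graph shows the two query styles side by side. This sketch substitutes plain keyword matching for semantic search and a breadth-first call-path search for real taint analysis; the `GRAPH` layout and both helper functions are hypothetical.

```python
from collections import deque

# Hypothetical index: each function has a summary and its direct callees.
GRAPH = {
    "recv_packet": {"summary": "reads bytes from a network socket", "calls": ["parse_header"]},
    "parse_header": {"summary": "parses network packet header fields", "calls": ["copy_payload"]},
    "copy_payload": {"summary": "copies payload into a fixed buffer", "calls": []},
    "print_usage": {"summary": "prints command line help", "calls": []},
}

def search_by_behavior(graph, query):
    """Keyword stand-in for semantic search: every query term must appear."""
    terms = query.lower().split()
    return [name for name, node in graph.items()
            if all(term in node["summary"] for term in terms)]

def find_call_path(graph, source, sink):
    """Breadth-first search for the shortest call path from source to sink."""
    queue, seen = deque([[source]]), {source}
    while queue:
        path = queue.popleft()
        if path[-1] == sink:
            return path
        for callee in graph[path[-1]]["calls"]:
            if callee not in seen:
                seen.add(callee)
                queue.append(path + [callee])
    return None  # sink is not reachable from source

network_functions = search_by_behavior(GRAPH, "network")
path = find_call_path(GRAPH, "recv_packet", "copy_payload")
print(network_functions, path)
```

A call path is only a coarse proxy for taint: it shows that data could flow from source to sink, not that it does.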

MCP Servers: Let AI Drive Your Disassembler

Each plugin has a companion MCP server that exposes your disassembler's full API to external AI clients. Connect Claude Desktop, Cursor, or any MCP-compatible tool and let the LLM navigate functions, read decompiled code, set comments, and rename symbols — all programmatically.
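On the wire, an MCP client invokes a tool with a JSON-RPC 2.0 `tools/call` request. The sketch below builds one such message; the `rename_symbol` tool name and its arguments are hypothetical and may not match what any of these servers expose.

```python
import json

def make_tool_call(request_id, tool_name, arguments):
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# Hypothetical tool name and arguments, for illustration only.
request = make_tool_call(1, "rename_symbol",
                         {"address": "0x401000", "new_name": "parse_header"})
wire_message = json.dumps(request)
print(wire_message)
```

In practice an MCP client library handles this framing; the point is that each action the AI takes is an explicit, inspectable request.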

GhidrAssistMCP

Runs inside Ghidra as an extension. Shares a single server across all CodeBrowser windows with automatic focus tracking, so the AI always knows which binary you're looking at.

BinAssistMCP

Supports concurrent analysis of multiple binaries with intelligent session management. Includes guided workflow prompts for vulnerability research, protocol analysis, and documentation generation.

IDAssistMCP

Standalone server for IDA Pro 9.x with thread-safe database modifications. Five consolidated tools use action parameters for a clean, predictable API surface that LLMs can reason about reliably.
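The action-parameter pattern can be sketched as one tool that dispatches on an `action` string instead of many single-purpose tools. Everything here is an assumption made for illustration: the `FunctionTool` class, its three actions, and the in-memory name table are hypothetical, and the lock is a simple stand-in for whatever mechanism the real server uses to serialize database writes.

```python
import threading

class FunctionTool:
    """One hypothetical tool with several actions selected by a parameter."""

    def __init__(self, names):
        self._names = dict(names)      # address -> function name
        self._lock = threading.Lock()  # stand-in for the host's write serialization

    def call(self, action, **params):
        handlers = {"list": self._list, "get": self._get, "rename": self._rename}
        handler = handlers.get(action)
        if handler is None:
            return {"ok": False, "error": f"unknown action: {action}"}
        return handler(**params)

    def _list(self):
        return {"ok": True, "functions": sorted(self._names.items())}

    def _get(self, address):
        return {"ok": True, "name": self._names[address]}

    def _rename(self, address, new_name):
        # Only writes are serialized in this sketch.
        with self._lock:
            old_name = self._names[address]
            self._names[address] = new_name
        return {"ok": True, "old": old_name, "new": new_name}

tool = FunctionTool({0x401000: "sub_401000"})
result = tool.call("rename", address=0x401000, new_name="parse_header")
print(result)
```

Fewer tools with a uniform result shape give the model a smaller menu to choose from, and unknown actions fail with a readable error instead of an exception.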

Why Analysts Choose These Plugins

Built by reverse engineers, for reverse engineers. Every feature exists because we needed it ourselves.

Analysis That Persists

Explanations, security flags, and graph data are stored in local databases. Close the binary, reopen it weeks later, and everything is still there. Your analysis compounds over time instead of disappearing with each session.
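A minimal sketch of this kind of persistence, using Python's built-in `sqlite3` module: look up a stored explanation first and only call the model on a miss. The table layout and the `open_store` and `get_or_explain` helpers are hypothetical, and the example uses an in-memory database where a real plugin would use a file on disk.

```python
import sqlite3

def open_store(path):
    """Open (or create) the explanation store. The schema is an assumption."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS explanations (
               binary_sha256 TEXT NOT NULL,
               address INTEGER NOT NULL,
               summary TEXT NOT NULL,
               PRIMARY KEY (binary_sha256, address))"""
    )
    return conn

def get_or_explain(conn, binary_sha256, address, explain):
    """Return the stored summary, calling explain(address) only on a miss."""
    row = conn.execute(
        "SELECT summary FROM explanations WHERE binary_sha256 = ? AND address = ?",
        (binary_sha256, address),
    ).fetchone()
    if row:
        return row[0]
    summary = explain(address)
    conn.execute("INSERT INTO explanations VALUES (?, ?, ?)",
                 (binary_sha256, address, summary))
    conn.commit()
    return summary

calls = []
def fake_explain(address):
    """Stand-in for an LLM call; records how often it is invoked."""
    calls.append(address)
    return f"summary of function at {address:#x}"

conn = open_store(":memory:")
first = get_or_explain(conn, "abc123", 0x401000, fake_explain)
second = get_or_explain(conn, "abc123", 0x401000, fake_explain)
print(first, len(calls))
```

Keying rows on the binary's hash plus the function address is one way to keep results attached to the right file even if it is moved or renamed.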

Use Any LLM You Want

Every plugin supports Anthropic, OpenAI, Ollama, LM Studio, LiteLLM, and OAuth-based subscriptions. Run a local model for air-gapped work. Use Claude or GPT for maximum quality. Switch between them without changing your workflow.

Search by Behavior, Not by Name

The semantic graph lets you query functions by what they do: "which functions handle user input?", "show me crypto operations", "find network parsers." No more scrolling through thousands of sub_* stubs hoping to find the right one.

Open Source and Extensible

All plugins and MCP servers are open source. Add your own MCP tools, connect external servers, upload custom RAG documents, or modify the system prompt. The architecture is designed to get out of your way.